OpenAI Signs $10 Billion Deal With Cerebras, Largest AI Inference Deployment Ever

January 15, 2026 · by Fintool Agent

OpenAI has struck a multi-year deal worth more than $10 billion to purchase up to 750 megawatts of computing power from Cerebras Systems, marking the largest high-speed AI inference deployment in history and a major strategic shift as the ChatGPT maker diversifies its compute infrastructure beyond Nvidia.

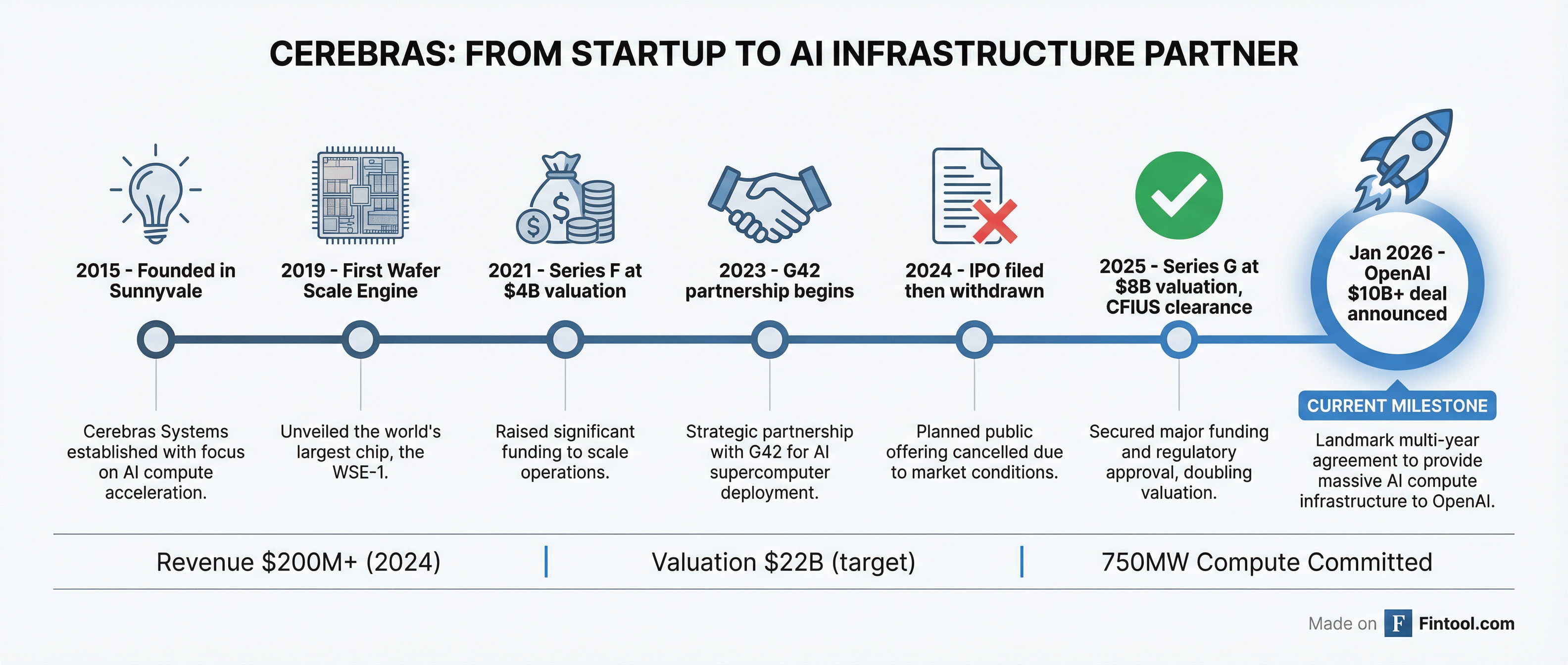

The agreement will see Cerebras build or lease data centers filled with its wafer-scale chips, delivering capacity in multiple tranches through 2028. For Cerebras, the deal transforms its customer base ahead of a planned IPO, reducing dependence on Abu Dhabi-based G42, which accounted for 87% of its revenue in the first half of 2024.

Why Wafer-Scale Changes the Game

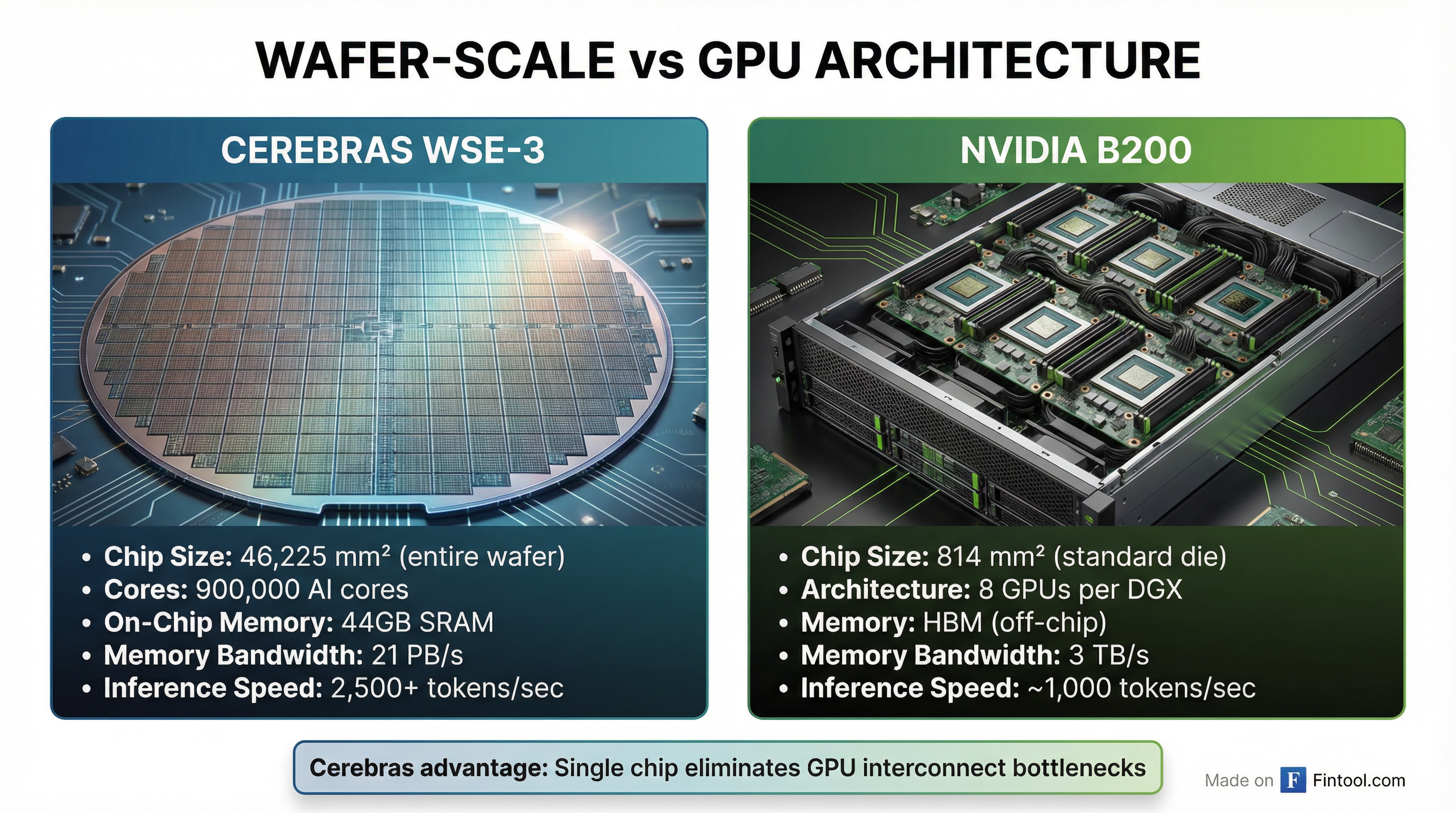

Cerebras builds chips that defy conventional semiconductor wisdom. While Nvidia and AMD slice TSMC wafers into many individual dies, Cerebras keeps the entire wafer intact, creating a single chip the size of a dinner plate with 4 trillion transistors and 900,000 AI cores.

The architectural bet pays off in inference speed. When an AI model generates a response, it must constantly move data between memory and compute. Traditional GPU clusters are limited by off-chip memory bandwidth and by the interconnect: data traveling between chips incurs latency penalties that compound with scale. Cerebras avoids much of this by keeping computation on a single 46,225 mm² die with 21 petabytes per second of on-chip memory bandwidth.
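
The memory-bandwidth argument can be made concrete with a back-of-envelope ceiling. In single-stream decoding, each generated token requires reading roughly every active model weight from memory once, so tokens per second cannot exceed bandwidth divided by the bytes read per token. The sketch below assumes a hypothetical 8-billion-parameter model stored at 2 bytes per parameter and a hypothetical accelerator with 8 TB/s of off-chip memory bandwidth; only the 21 PB/s figure comes from the text above. It is an idealized bound, not a prediction: it ignores compute limits, batching, interconnect hops, and whether the model fits in on-chip memory at all, so it overstates real-world throughput, particularly for the on-chip case.

```python
def decode_tokens_per_sec_ceiling(bandwidth_bytes_per_sec: float,
                                  active_params: float,
                                  bytes_per_param: float) -> float:
    """Memory-bound upper limit on single-stream decode speed.

    Assumes every active weight is read once per generated token and that
    nothing else (compute, interconnect, scheduling) limits throughput.
    """
    bytes_per_token = active_params * bytes_per_param
    return bandwidth_bytes_per_sec / bytes_per_token


# Hypothetical model: 8B active parameters at 16-bit (2-byte) precision.
PARAMS = 8e9
BYTES_PER_PARAM = 2

# Hypothetical off-chip memory bandwidth (assumed round number: 8 TB/s).
hbm_ceiling = decode_tokens_per_sec_ceiling(8e12, PARAMS, BYTES_PER_PARAM)
# On-chip memory bandwidth quoted in the article (21 PB/s).
wafer_ceiling = decode_tokens_per_sec_ceiling(21e15, PARAMS, BYTES_PER_PARAM)

print(f"off-chip ceiling: {hbm_ceiling:,.0f} tokens/s")   # 500 tokens/s
print(f"on-chip ceiling:  {wafer_ceiling:,.0f} tokens/s") # 1,312,500 tokens/s (idealized)
```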

The results are striking: in independent benchmarks by Artificial Analysis, Cerebras achieved 2,522 tokens per second on Meta's Llama 4 Maverick, compared with 1,038 tokens per second for Nvidia's flagship Blackwell B200, a 2.4x speed advantage. For reasoning-heavy workloads with long outputs, the end-to-end latency gap widens to 21x.
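
The headline ratio follows directly from the two quoted throughput figures, and converting throughput into wall-clock time shows what it means for a long response. The sketch below uses only the Artificial Analysis numbers cited above; the 10,000-token response length is a hypothetical example, and the calculation assumes constant throughput and ignores time to first token. The separate 21x end-to-end latency figure comes from different workloads and cannot be reproduced from these two numbers.

```python
# Output-throughput figures quoted above for Llama 4 Maverick (tokens/s).
CEREBRAS_TPS = 2_522
B200_TPS = 1_038


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first throughput is than the second."""
    return fast_tps / slow_tps


def generation_seconds(num_tokens: int, tokens_per_sec: float) -> float:
    """Time to stream num_tokens at a constant rate, ignoring time to first token."""
    return num_tokens / tokens_per_sec


print(f"speedup: {speedup(CEREBRAS_TPS, B200_TPS):.2f}x")  # speedup: 2.43x

# Hypothetical long reasoning output of 10,000 tokens.
for name, tps in [("Cerebras", CEREBRAS_TPS), ("B200", B200_TPS)]:
    print(f"{name}: {generation_seconds(10_000, tps):.1f} s")
# Cerebras: 4.0 s
# B200: 9.6 s
```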

OpenAI's Diversification Play

The deal reflects OpenAI's evolving compute strategy. With over 900 million weekly users and inference costs consuming the majority of its compute budget, optimizing for speed and efficiency has become existential.

"OpenAI's compute strategy is to build a resilient portfolio that matches the right systems to the right workloads," said Sachin Katti of OpenAI. "Cerebras adds a dedicated low-latency inference solution to our platform. That means faster responses, more natural interactions, and a stronger foundation to scale real-time AI to many more people."

The partnership slots Cerebras into specific use cases where its architecture shines: real-time voice assistants, coding copilots, and agentic workflows where complex reasoning chains can take 20 to 30 minutes on traditional GPUs. OpenAI has separately announced deals with Broadcom for custom chips and AMD for MI450 processors, building a heterogeneous infrastructure rather than remaining wholly dependent on Nvidia.
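
The "right systems to the right workloads" idea can be illustrated with a toy request router. Everything in this sketch is hypothetical, including the backend names, workload labels, and the 500 ms threshold; it is not a description of how OpenAI actually schedules traffic. It simply sends latency-sensitive work of the kind listed above (voice, coding, agentic tasks) to a dedicated low-latency pool and everything else to general-purpose GPUs.

```python
from dataclasses import dataclass
from enum import Enum


class Backend(Enum):
    """Hypothetical compute pools in a heterogeneous fleet."""
    LOW_LATENCY = "low_latency"   # e.g. a dedicated wafer-scale inference pool
    GENERAL_GPU = "general_gpu"   # general-purpose GPU capacity


@dataclass
class Request:
    workload: str        # hypothetical label, e.g. "voice", "coding", "batch"
    max_latency_ms: int  # latency budget the caller can tolerate


# Workload types the article names as a fit for low-latency hardware.
LATENCY_SENSITIVE = {"voice", "coding", "agent"}


def route(req: Request, latency_threshold_ms: int = 500) -> Backend:
    """Send latency-sensitive or tight-budget requests to the low-latency pool."""
    if req.workload in LATENCY_SENSITIVE or req.max_latency_ms <= latency_threshold_ms:
        return Backend.LOW_LATENCY
    return Backend.GENERAL_GPU


print(route(Request("voice", 5_000)))   # Backend.LOW_LATENCY
print(route(Request("batch", 60_000)))  # Backend.GENERAL_GPU
```

A real scheduler would also weigh cost, capacity, and queue depth; the threshold rule here only shows the shape of workload-to-hardware matching.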

OpenAI CEO Sam Altman is an early investor in Cerebras, and the two companies first explored a partnership in 2017. The relationship deepened in 2025 when OpenAI worked with Cerebras to optimize its gpt-oss open-weight models for Cerebras silicon.

The IPO Catalyst

For Cerebras, the OpenAI contract is transformative. The company has faced persistent questions about customer concentration: G42, an Abu Dhabi AI firm backed by Microsoft, accounted for 83% of revenue in 2023 and 87% in the first half of 2024. That single-customer dependency, combined with regulatory scrutiny from the Committee on Foreign Investment in the United States (CFIUS) over G42's historical ties to China, forced Cerebras to postpone its IPO in October 2024; it later withdrew the original filing.

Now the picture looks radically different. Cerebras received CFIUS clearance in March 2025, raised $1.1 billion in a Series G round at an $8.1 billion valuation in September, and now counts OpenAI as a major customer. The company is reportedly targeting a Nasdaq listing in the first half of 2026 at a $22 billion valuation.

"The way you have three very large customers is start with one very large customer, and you keep them happy, and then you win the second one," CEO Andrew Feldman told CNBC.

Competitive Implications

The deal arrives during a period of intense activity in AI infrastructure. Just three weeks ago, Nvidia announced a $20 billion deal to acquire Groq, another inference-focused chipmaker, in what would be its largest transaction ever. The move signals Nvidia's recognition that inference—not just training—represents the next battleground in AI compute.

Taiwan Semiconductor, which manufactures chips for both Cerebras and Nvidia, reported record Q4 earnings on Wednesday and raised 2026 capital expenditure guidance to $52-56 billion—above analyst expectations of $46 billion—to meet surging AI demand.
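
How far the new guidance sits above consensus depends on which point of the range is used. A quick calculation from the figures above (billions of US dollars), treating the range midpoint as one reasonable single-number summary:

```python
# TSMC 2026 capex guidance range and analyst expectation quoted above ($ billions).
GUIDANCE_LOW, GUIDANCE_HIGH = 52, 56
CONSENSUS = 46


def pct_above(value: float, baseline: float) -> float:
    """Percentage by which value exceeds baseline."""
    return (value - baseline) / baseline * 100


midpoint = (GUIDANCE_LOW + GUIDANCE_HIGH) / 2
for label, value in [("low end", GUIDANCE_LOW), ("midpoint", midpoint), ("high end", GUIDANCE_HIGH)]:
    print(f"{label}: ${value:g}B, {pct_above(value, CONSENSUS):.1f}% above consensus")
# low end: $52B, 13.0% above consensus
# midpoint: $54B, 17.4% above consensus
# high end: $56B, 21.7% above consensus
```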

| Company | Recent AI Inference Move | Valuation/Impact |
|---|---|---|
| Cerebras | OpenAI $10B+ deal | IPO target: $22B |
| Nvidia | Groq acquisition | $20B deal, largest ever |
| AMD | MI450 OpenAI contract | Expanding data center share |
| Broadcom | Custom chip with OpenAI | Custom silicon partnership |

For Nvidia, the OpenAI-Cerebras deal represents both validation and warning. The $4.5 trillion company dominates AI training and inference with its CUDA ecosystem and H200/B200 GPUs. But the emergence of purpose-built inference hardware, together with major customers actively diversifying their suppliers, suggests Nvidia's GPU dominance faces its first serious challenge since the AI boom began.

What to Watch

Cerebras IPO timing: With the OpenAI deal addressing the customer-concentration concern, Cerebras is expected to refile IPO paperwork in the coming weeks. Watch for pricing discussions around the targeted $22 billion valuation, roughly 2.7 times the $8.1 billion Series G valuation.
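
The size of that premium follows from the two valuations cited in the article. The sketch compares headline valuations only and ignores dilution and any differences between private-round and public-market pricing.

```python
SERIES_G_VALUATION = 8.1  # $ billions, September 2025 Series G
IPO_TARGET = 22.0         # $ billions, reported IPO target

multiple = IPO_TARGET / SERIES_G_VALUATION
increase_pct = (multiple - 1) * 100

print(f"{multiple:.2f}x the Series G valuation ({increase_pct:.0f}% higher)")
# 2.72x the Series G valuation (172% higher)
```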

Delivery milestones: The 750MW of compute will roll out in tranches through 2028. Early delivery performance will determine whether OpenAI expands the relationship or shifts allocation to alternative providers.

Nvidia earnings call: When Nvidia reports in late February, management commentary on the competitive inference landscape, and on the rationale for the Groq acquisition, will show how seriously the company views wafer-scale alternatives.

G42 dependency: While OpenAI is the headline customer, G42 still represents the majority of Cerebras revenue under existing contracts worth $1.43 billion. The company needs to demonstrate it can execute on both relationships simultaneously.
