West Virginia Sues Apple Over Child Abuse Material, Citing Internal Admission as 'Greatest Platform for Distributing Child Porn'

February 19, 2026 · by Fintool Agent

West Virginia Attorney General JB McCuskey filed a first-of-its-kind government lawsuit against Apple on Thursday, alleging the $3.8 trillion tech giant knowingly allowed its iCloud platform to become a vehicle for distributing child sexual abuse material (CSAM)—and citing explosive internal communications in which Apple described itself as the "greatest platform for distributing child porn."

The consumer protection complaint, filed in the Circuit Court of Mason County, accuses Apple of prioritizing user privacy and its business interests over child safety for years, even as competitors like Google, Meta, and Microsoft deployed industry-standard detection tools to combat the spread of illegal content.

Apple shares fell 1.4% to $260.55 on Thursday amid broader market weakness, though the stock's reaction to the lawsuit appeared muted as investors weighed the early-stage nature of the legal proceedings.

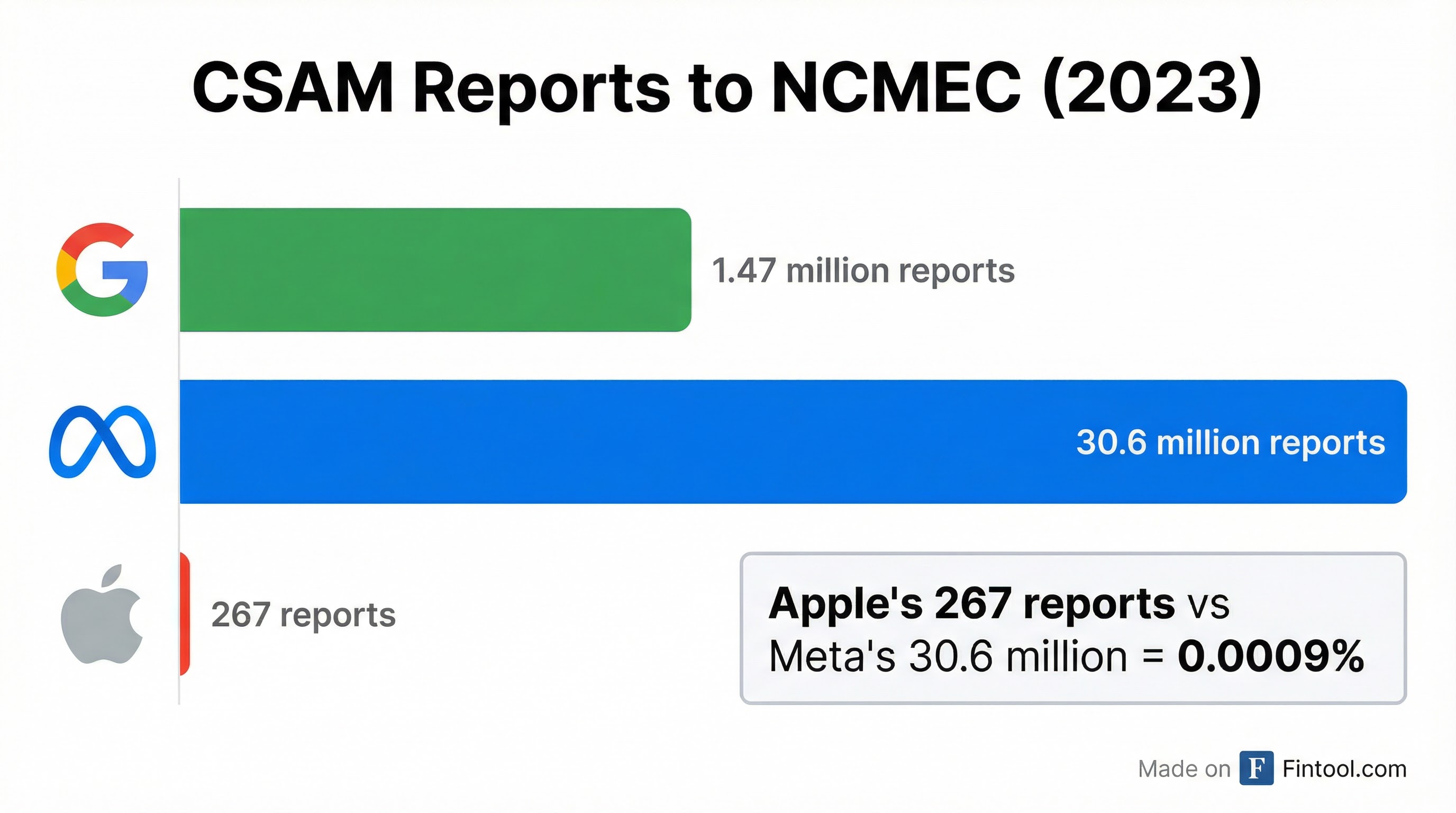

The Stark Reality: 267 vs. 30.6 Million Reports

Federal law requires all U.S.-based technology companies to report detected CSAM to the National Center for Missing and Exploited Children (NCMEC). The disparity between Apple and its peers is staggering.

In 2023, Apple made just 267 CSAM reports to NCMEC. By comparison:

| Company | CSAM Reports (2023) | Multiple of Apple's Reports |
|---|---|---|
| Meta | 30.6 million | 114,607x |
| Google | 1.47 million | 5,506x |
| Apple | 267 | 1x |
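
The multiples in the table follow from dividing each company's report count by Apple's 267. Here is a minimal sketch of that arithmetic, assuming the rounded report figures quoted above; the function and variable names are illustrative and not taken from the complaint.

```python
# Report counts as quoted in the article (2023 NCMEC figures, rounded).
APPLE_REPORTS_2023 = 267

reported_counts = {
    "30.6 million": 30_600_000,
    "1.47 million": 1_470_000,
    "267": 267,
}


def multiple_of_apple(count: int, apple_count: int = APPLE_REPORTS_2023) -> int:
    """Return how many times larger `count` is than Apple's count, rounded."""
    return round(count / apple_count)


# Multiples keyed by the figure as it appears in the table.
multiples = {label: multiple_of_apple(n) for label, n in reported_counts.items()}

for label, m in multiples.items():
    print(f"{label} reports -> {m:,}x Apple's count")
```

Because the inputs are rounded to three significant figures, the multiples are approximate and would shift slightly with exact report counts.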

"Preserving the privacy of child predators is absolutely inexcusable," Attorney General McCuskey said. "Since Apple has so far refused to police themselves and do the morally right thing, I am filing this lawsuit to demand Apple follow the law, report these images, and stop re-victimizing children."

Apple's Abandoned Promise

The lawsuit arrives more than four years after Apple announced—then abandoned—a feature designed to detect CSAM on its platforms.

August 2021: Apple unveiled plans for a CSAM detection tool called NeuralHash that would check iCloud Photos for matches against known abuse images, using cryptographic techniques intended to preserve user privacy.

September 2021: Following backlash from digital rights groups including the Electronic Frontier Foundation and over 90 civil society organizations worldwide, Apple paused the rollout. Critics warned the technology could be exploited by authoritarian governments for surveillance and censorship.

December 2022: Apple quietly confirmed it had permanently abandoned the CSAM scanning feature, citing concerns that "scanning users' privately stored iCloud data" could not be done "without ultimately imperiling the security and privacy of our users."

Instead, Apple pivoted to "Communication Safety" features—on-device tools that warn children and blur images when nudity is detected in Messages, FaceTime, and AirDrop. Critics argue these features are insufficient because they don't detect or report known CSAM images.

The Privacy Defense Under Fire

Apple has built its brand identity around privacy, with CEO Tim Cook repeatedly emphasizing that "privacy is a fundamental human right." The company touts end-to-end encryption and on-device processing as key differentiators from data-hungry competitors.

In its FY 2025 10-K filing, Apple disclosed that it faces "new and changing laws and regulations regarding online safety, including enhanced protections for minors and mandatory age verification requirements" that could require "significant modifications to the Company's products, services and operations."

Apple's response to the lawsuit emphasized its alternative approach. "At Apple, protecting the safety and privacy of our users, especially children, is central to what we do," a spokesperson told media. The company pointed to its Communication Safety feature, which "automatically intervenes on kids' devices when nudity is detected."

But Attorney General McCuskey rejected this framing during a press conference Thursday: "There is a social construct that dictates that you also have to be part of solving these large-scale problems, and one of those problems is the proliferation and exploitation of children in this country."

Industry Standard: PhotoDNA

The lawsuit specifically criticizes Apple for refusing to implement PhotoDNA, the industry-standard CSAM detection technology developed by Microsoft and Dartmouth College in 2009.

PhotoDNA uses "hashing and matching" to automatically identify known CSAM images by comparing digital fingerprints against a database maintained by NCMEC and law enforcement. Microsoft provides the technology free to qualified organizations, including tech companies.
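
The general "hashing and matching" idea can be illustrated with a toy example. PhotoDNA's actual algorithm is proprietary and is not reproduced here. The sketch below substitutes a simple average hash over tiny grayscale grids and a Hamming-distance threshold, and every name, image, and threshold in it is a hypothetical stand-in chosen for illustration.

```python
from typing import Iterable, List

Pixels = List[List[int]]  # toy grayscale image: rows of 0-255 values


def average_hash(pixels: Pixels) -> int:
    """Toy perceptual hash: one bit per pixel, set when the pixel is above the mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")


def matches_known(candidate: int, known_hashes: Iterable[int], max_distance: int = 2) -> bool:
    """True if the candidate is within max_distance bits of any known hash."""
    return any(hamming_distance(candidate, known) <= max_distance for known in known_hashes)


# Hypothetical 4x4 "images" standing in for real files.
known_image = [[10, 200, 10, 200], [200, 10, 200, 10],
               [10, 200, 10, 200], [200, 10, 200, 10]]
slightly_altered = [[12, 198, 11, 205], [190, 15, 210, 9],
                    [10, 201, 12, 199], [202, 8, 195, 14]]
unrelated = [[200, 200, 10, 10], [200, 200, 10, 10],
             [10, 10, 200, 200], [10, 10, 200, 200]]

# The "database" holds fingerprints only, never the images themselves.
known_db = {average_hash(known_image)}

print(matches_known(average_hash(slightly_altered), known_db))  # small edits still match
print(matches_known(average_hash(unrelated), known_db))         # different content does not
```

The design point this illustrates is that matching runs against fingerprints of previously identified images rather than classifying new content, and that a distance threshold lets minor alterations such as brightness changes still register as a match.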

The complaint alleges Apple's abandoned NeuralHash tool was "far inferior" to PhotoDNA, and that the company's real motivation for shelving it was to avoid the operational burden and reputational risk of detecting abuse material on its platform.

Legal Pressure Mounts

The West Virginia lawsuit adds to an already crowded legal docket for Apple, which disclosed in its FY 2025 10-K that it faces:

- DOJ Antitrust Lawsuit: Civil litigation alleging monopolization in "performance smartphones" and "smartphones" markets, filed March 2024

- Epic Games Dispute: In April 2025, a California court found Apple in violation of an injunction requiring external payment options and referred the company to the U.S. Attorney for potential criminal contempt proceedings

- EU DMA Investigations: €500 million fine in April 2025 for restricting developer steering, with additional investigations ongoing that could result in fines up to 10% of worldwide revenue

| Legal Matter | Status | Potential Impact |
|---|---|---|
| DOJ Antitrust | Active | Equitable relief, behavioral remedies |
| Epic Games Contempt | Referred to U.S. Attorney | Criminal contempt, forced platform changes |
| EU DMA Article 5(4) | €500M fine, appealed | Business practice changes |
| EU DMA Article 6(4) | Preliminary findings | Up to 10% of global revenue |
| West Virginia CSAM | Filed Feb 2026 | Statutory/punitive damages, injunctive relief |

The company cautioned that "resolution of legal matters in a manner inconsistent with management's expectations could have a material impact on the Company's financial condition and operating results."

What's at Stake

If West Virginia prevails, the lawsuit could force Apple to fundamentally redesign how it handles user content—potentially requiring the implementation of CSAM scanning technology across its ecosystem.

The state is seeking:

- Statutory and punitive damages

- Injunctive relief requiring Apple to implement effective CSAM detection

- Equitable remedies mandating safer product design

For investors, the lawsuit represents another front in the expanding regulatory and legal pressure on Big Tech's handling of user content. While the immediate financial impact may be limited, a successful case could establish precedent for similar actions by other states and create ongoing compliance costs.

Apple's FY 2025 revenue of $395 billion is driven increasingly by services ($100 billion annually), with iCloud a key component of that ecosystem. Any requirement to scan iCloud content could affect user adoption and the company's carefully cultivated privacy brand.

What to Watch

Near-term: Apple's response to the lawsuit and whether other state attorneys general follow West Virginia's lead. Republican AGs in particular have been aggressive on Big Tech child safety issues.

Medium-term: Court proceedings in Mason County and any discovery that surfaces additional internal communications about Apple's CSAM decisions.

Long-term: Whether this lawsuit—combined with EU pressure and antitrust scrutiny—forces Apple to reconsider its position on content scanning, potentially unraveling its privacy-centric brand positioning.

Apple declined to comment beyond its initial statement. The company's stock closed at $260.55, down 1.4% on the day.
